‘AI does the

Louise Häggström & Clara Neegaard

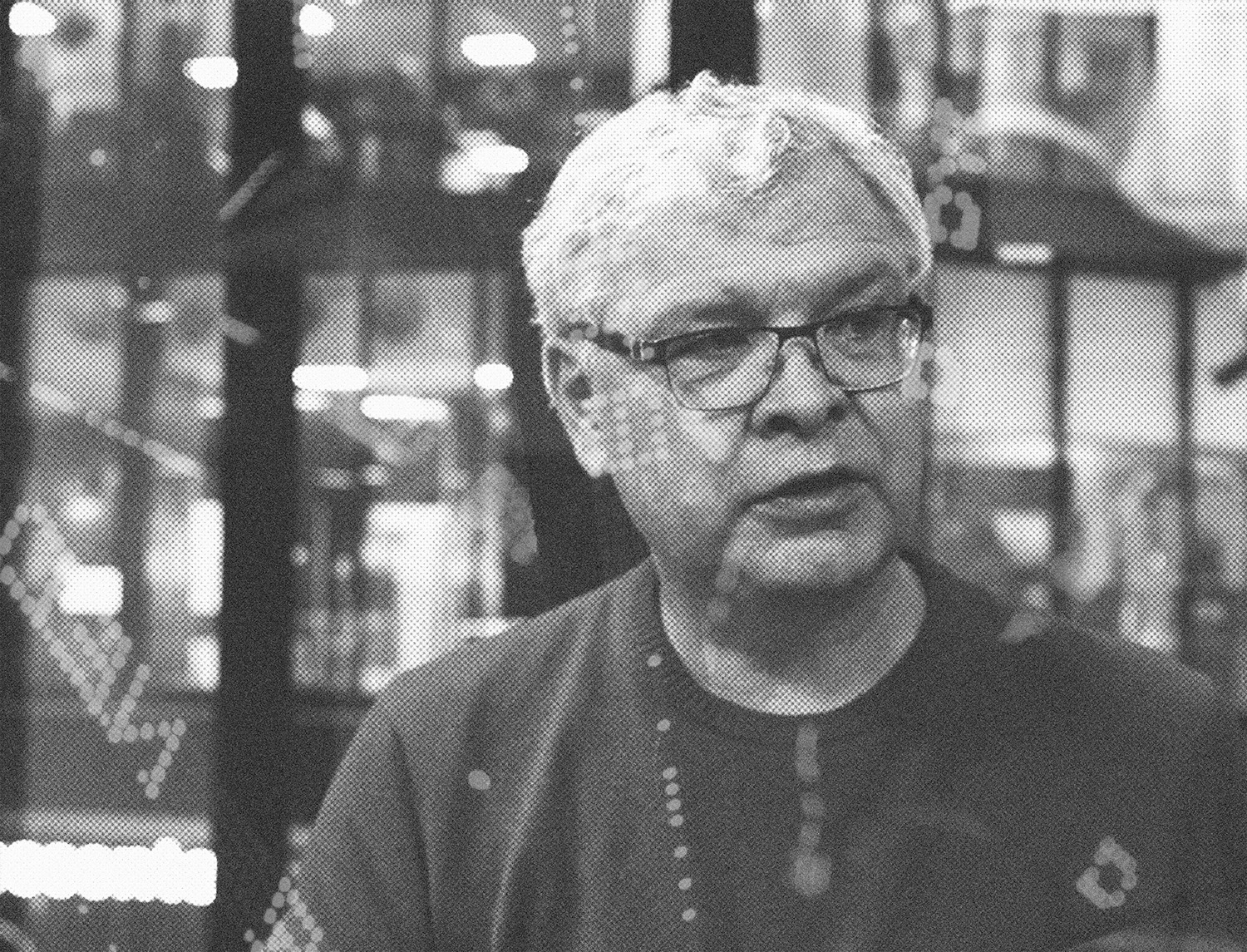

Should people working in the media field be worried that AI and computers are taking over their livelihood? Carl-Gustav Lindén, who works as a professor and researcher, argues that they should only worry if they’re not willing to cooperate with machines.

Computers and artificial intelligence have been a part of the media field for many years. Today, AI is used for simple tasks such as moderating comments and arranging content on the front page of news sites, based on the preferences of the people visiting the site. It’s also used for image recognition and for generating text.

– Many believe that AI is working end to end, that it does everything, but that's not the case. It is specific tasks and processes that can be automated using AI.

Lindén has had an extensive career as a financial reporter, and therefore worked with data journalism even before it was a thing. After obtaining his PhD in 2012, he worked as a consultant and researcher. In September 2020 he became Professor in Data Journalism at the University of Bergen, where he’s currently working.

You can relax, AI is not taking your job. As Lindén puts it, “AI does the things we don’t want to do or cannot do”. It analyses large amounts of data, a job that journalists don't have the knowledge or capacity to do. But there’s still resistance among journalists when it comes to AI.

– They are very conservative because they have trouble seeing how AI would affect them. They see development more as a threat, which is probably the case in other creative fields as well.

Lindén argues that there is a place for journalists in developing AI. The change is going to happen whether they are on board or not. It’s just not guaranteed that it’s going to be developed to the benefit of journalists if they don’t provide their input.

– It’s not about knowing how to do coding or programming, but about being able to understand and collaborate with people with that expertise. It’s about explaining what you need and understanding what’s possible.

One example that’s often brought up when discussing the dangers of AI technology is bias. AI is built on data from human activity, which automatically makes it biased.

– We’re all racists even if we don’t believe we are. But the machines see it. Humans are bad trainers for AI because we say one thing and do something else.

To create a society where humans and machines can work together, we need to understand our different roles. Machines do what we don’t want to do or are unable to do. Humans are here to understand context, and to create quality journalism, meaning and value. One component of a successful implementation of AI is trust in traditional media and journalism, which is rare but does exist in the Nordic countries.

– Trust in the media in other countries is often weak, and it doesn’t change for the better if AI is built into a system where people are already suspicious.

Finally, what does the future for journalists look like?

– It looks very bright. But we need to play an active part in the development. We can’t compete against a robot; we need to make things even more human. Authenticity, empathy, and other human qualities are becoming increasingly important.